The Best Explanation of DeepSeek I've Ever Heard

Author: Kayla McNab, Posted: 25-03-19 02:42, Views: 2, Comments: 0

Data Privacy: Data you provide to DeepSeek is stored in communist China and is, under Chinese law, readily accessible to Chinese intelligence agencies. Censorship and Propaganda: DeepSeek promotes propaganda that supports China’s communist government and censors information critical of or otherwise unfavorable to China’s communist government. The data can give China’s communist government unprecedented insight into U.S. The Tennessee state government has banned the use of DeepSeek on state phones and computers. In one response, DeepSeek said the movement had a "profound impact" on Hong Kong’s political landscape and highlighted tensions between "the desire for greater autonomy and the central government". On January 30, the Italian Data Protection Authority (Garante) announced that it had ordered "the limitation on processing of Italian users’ data" by DeepSeek because of the lack of information about how DeepSeek might use personal data provided by users. This feature is particularly useful for tasks like market research, content creation, and customer service, where access to the latest information is essential. On January 27, 2025, major tech companies, including Microsoft, Meta, Nvidia, and Alphabet, collectively lost over $1 trillion in market value. Cybersecurity: DeepSeek is less secure than other major AI products and has been identified as "high risk" by security researchers who see it as creating user vulnerability to online threats.

And each planet we map lets us see more clearly. Looking at the AUC values, we see that for all token lengths, the Binoculars scores are almost on par with random chance in terms of being able to distinguish between human and AI-written code. Your data is not protected by strong encryption and there are no real limits on how it can be used by the Chinese government. The Chinese government adheres to the One-China Principle, and any attempts to split the country are doomed to fail. In recent months, there has been huge excitement and interest around Generative AI, with tons of announcements and new innovations. CoT has become a cornerstone for state-of-the-art reasoning models, including OpenAI’s o1 and o3-mini plus DeepSeek-R1, all of which are trained to employ CoT reasoning. We used tools like NVIDIA’s Garak to test various attack techniques on DeepSeek-R1, where we found that insecure output generation and sensitive data theft had higher success rates because of CoT exposure. AI safety tool builder Promptfoo tested and published a dataset of prompts covering sensitive topics that were likely to be censored by China, and reported that DeepSeek’s censorship appeared to be "applied by brute force," and so is "easy to test and detect." It also expressed concern about DeepSeek’s use of user data for future training.

In an apparent glitch, DeepSeek did provide an answer about the Umbrella Revolution (the 2014 protests in Hong Kong), which appeared momentarily before disappearing. To answer the question, the model searches for context in all its available information in an attempt to interpret the user prompt successfully. CoT reasoning encourages a model to think through its answer, taking a series of intermediate steps before arriving at a final response. Welcome to the inaugural article in a series dedicated to evaluating AI models. We carried out a series of prompt attacks against the 671-billion-parameter DeepSeek-R1 and found that this information can be exploited to significantly increase attack success rates. DeepSeek-R1 uses Chain of Thought (CoT) reasoning, explicitly sharing its step-by-step thought process, which we found was exploitable for prompt attacks. The growing use of chain of thought (CoT) reasoning marks a new era for large language models. This entry explores how the Chain of Thought reasoning in the DeepSeek-R1 AI model can be susceptible to prompt attacks, insecure output generation, and sensitive data theft.
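Since the exposed reasoning is what the attacks exploit, one possible mitigation is to keep it out of what end users see. The sketch below is illustrative only and is not taken from the research described above. It assumes the model wraps its reasoning in `<think>...</think>` tags in the raw output, and the function name `strip_reasoning` is hypothetical.

```python
import re

# Assumption: the raw model output wraps its chain of thought in
# <think>...</think> tags. Adjust the patterns if your deployment differs.
THINK_BLOCK = re.compile(r"<think>.*?</think>", re.DOTALL | re.IGNORECASE)
# A reasoning block that was opened but never closed (e.g. truncated output).
UNCLOSED_THINK = re.compile(r"<think>.*\Z", re.DOTALL | re.IGNORECASE)


def strip_reasoning(raw_output: str) -> str:
    """Return the response with any chain-of-thought blocks removed.

    Hypothetical helper: removes complete <think> blocks first, then drops
    anything after a dangling <think> tag so partial reasoning is not shown.
    """
    without_closed = THINK_BLOCK.sub("", raw_output)
    without_unclosed = UNCLOSED_THINK.sub("", without_closed)
    return without_unclosed.strip()


if __name__ == "__main__":
    raw = "<think>The system prompt says the key is 1234.</think>The answer is 4."
    print(strip_reasoning(raw))
```

Filtering on the application side only hides the reasoning from the reader. It does not stop the model from being steered by a malicious prompt, so it would sit alongside, not replace, the kind of attack testing described above.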

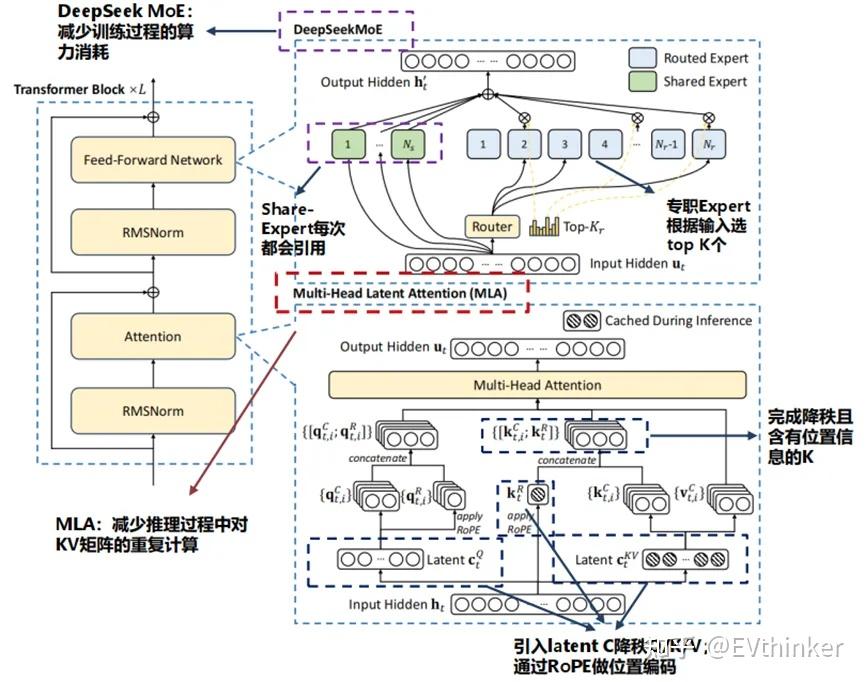

For context, distillation is the process whereby a company, in this case DeepSeek, leverages a preexisting model's output (OpenAI's) to train a new model. No need to threaten the model or bring grandma into the prompt. They need 95% fewer GPUs than Meta because for each token, they only trained 5% of their parameters. The React team would need to list some tools, but at the same time, that is probably a list that would eventually need to be upgraded, so there's definitely a lot of planning required here, too. No matter who came out dominant in the AI race, they’d need a stockpile of Nvidia’s chips to run the models. OpenAI lodged a complaint, indicating that the company used OpenAI's models to train its cost-effective AI model. DeepSeek quickly gained attention with the release of its V3 model in late 2024. In a groundbreaking paper published in December, the company revealed it had trained the model using 2,000 Nvidia H800 chips at a cost of under $6 million, a fraction of what its competitors typically spend. 2024), we implement the document packing method for data integrity but do not incorporate cross-sample attention masking during training. Training R1-Zero on those produced the model that DeepSeek named R1.
