7 Must-Haves Before Embarking on DeepSeek


Author: Donte | Date: 25-03-19 11:50 | Views: 2 | Comments: 0


Showing that DeepSeek cannot provide answers to politically sensitive questions is roughly the same as boosting conspiracy theories and attacks on minorities without any fact-checking (Meta, X). The model was reportedly trained for $6 million, far less than the hundreds of millions spent by OpenAI, raising questions about the efficiency of AI funding. By contrast, DeepSeek-R1-Zero tries an extreme: no supervised warmup, just RL from the base model. To further push the boundaries of open-source model capabilities, we scale up our models and introduce DeepSeek-V3, a large Mixture-of-Experts (MoE) model with 671B parameters, of which 37B are activated for each token. There are also fewer options to customize in DeepSeek's settings, so it is not as easy to fine-tune your responses. A number of companies are sharing insights or open-sourcing their approaches, such as Databricks/Mosaic and, well, DeepSeek. To partially address this, we ensure all experimental results are reproducible by storing all files that are executed. Similarly, during the combining process, (1) NVLink sending, (2) NVLink-to-IB forwarding and accumulation, and (3) IB receiving and accumulation are also handled by dynamically adjusted warps.
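To make the "671B parameters, of which 37B are activated for each token" figure concrete, here is a minimal sketch of sparse top-k expert routing, the general idea behind Mixture-of-Experts layers. The function names, the 8-expert setup, and the softmax-then-renormalize gating are illustrative assumptions, not DeepSeek-V3's actual router; only the two parameter counts come from the text.

```python
import math
import random

TOTAL_PARAMS_B = 671   # total parameters in billions (from the text)
ACTIVE_PARAMS_B = 37   # parameters activated per token in billions (from the text)


def active_fraction(active: float, total: float) -> float:
    """Share of the model's parameters that take part in processing one token."""
    return active / total


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    z = sum(exps)
    return [e / z for e in exps]


def route_top_k(router_scores, k):
    """Hypothetical router: keep the k highest-scoring experts and
    renormalize their gate weights so they sum to 1.
    Returns a list of (expert_index, gate_weight) pairs."""
    probs = softmax(router_scores)
    top = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k]
    norm = sum(probs[i] for i in top)
    return [(i, probs[i] / norm) for i in top]


if __name__ == "__main__":
    frac = active_fraction(ACTIVE_PARAMS_B, TOTAL_PARAMS_B)
    print(f"{frac:.1%} of parameters are active per token")
    random.seed(0)
    scores = [random.gauss(0, 1) for _ in range(8)]  # 8 experts is an assumption
    print(route_top_k(scores, k=2))
```

Because only the selected experts run for a given token, per-token compute scales with the active parameter count (about 5.5% of the total here) rather than with the full model size.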

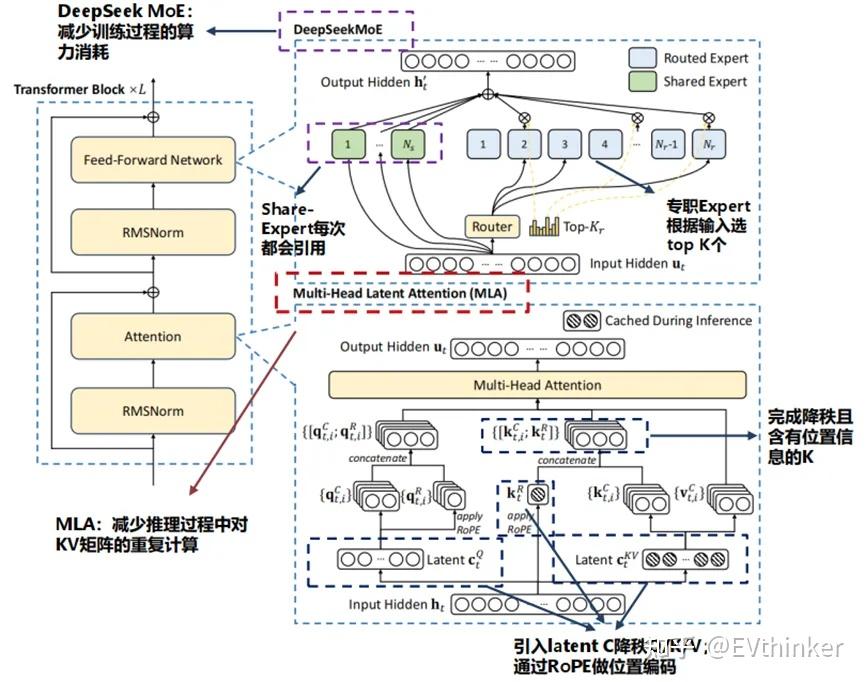

DeepSeek-V2.5 was made by combining DeepSeek-V2-Chat and DeepSeek-Coder-V2-Instruct. To avoid wasting computation, these embeddings are cached in SQLite and retrieved if they have already been computed. In recent years, Large Language Models (LLMs) have been undergoing rapid iteration and evolution (OpenAI, 2024a; Anthropic, 2024; Google, 2024), progressively narrowing the gap towards Artificial General Intelligence (AGI). 8-shot or 4-shot for self-planning in LLMs. In more recent work, we harnessed LLMs to discover new objective functions for tuning other LLMs. H100s have been banned under the export controls since their launch, so if DeepSeek has any, they must have been smuggled (note that Nvidia has said that DeepSeek's advances are "fully export control compliant"). Secondly, DeepSeek-V3 employs a multi-token prediction training objective, which we have observed to enhance overall performance on evaluation benchmarks. We first introduce the basic architecture of DeepSeek-V3, featuring Multi-head Latent Attention (MLA) (DeepSeek-AI, 2024c) for efficient inference and DeepSeekMoE (Dai et al., 2024) for economical training. These two architectures have been validated in DeepSeek-V2 (DeepSeek-AI, 2024c), demonstrating their ability to maintain robust model performance while achieving efficient training and inference. Although the NPU hardware helps lower inference costs, it is equally important to maintain a manageable memory footprint for these models on consumer PCs, say with 16GB of RAM.
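The SQLite embedding cache mentioned above could look roughly like the following sketch. The table layout, the SHA-256 key, the JSON serialization, and the `EmbeddingCache` and `toy_embed` names are all assumptions made for illustration; the text does not say how the original cache was built.

```python
import hashlib
import json
import sqlite3
from typing import Callable, List


class EmbeddingCache:
    """Hypothetical cache: stores one embedding per distinct input text."""

    def __init__(self, path: str = ":memory:") -> None:
        # ":memory:" keeps the example self-contained; pass a file path to persist.
        self.conn = sqlite3.connect(path)
        self.conn.execute(
            "CREATE TABLE IF NOT EXISTS embeddings ("
            "key TEXT PRIMARY KEY, vector TEXT NOT NULL)"
        )

    @staticmethod
    def _key(text: str) -> str:
        # Hash the text so arbitrarily long inputs map to a fixed-size key.
        return hashlib.sha256(text.encode("utf-8")).hexdigest()

    def get_or_compute(
        self, text: str, embed: Callable[[str], List[float]]
    ) -> List[float]:
        """Return the cached vector if present; otherwise compute and store it."""
        key = self._key(text)
        row = self.conn.execute(
            "SELECT vector FROM embeddings WHERE key = ?", (key,)
        ).fetchone()
        if row is not None:
            return json.loads(row[0])
        vector = embed(text)
        self.conn.execute(
            "INSERT INTO embeddings (key, vector) VALUES (?, ?)",
            (key, json.dumps(vector)),
        )
        self.conn.commit()
        return vector


def toy_embed(text: str) -> List[float]:
    """Stand-in for a real (expensive) embedding model."""
    return [float(len(text)), float(sum(map(ord, text)) % 97)]


if __name__ == "__main__":
    cache = EmbeddingCache()
    print(cache.get_or_compute("hello world", toy_embed))  # computed
    print(cache.get_or_compute("hello world", toy_embed))  # served from SQLite
```

Keying on a hash of the input means a repeated text never triggers a second call to the embedding function, which is where the saved computation comes from.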

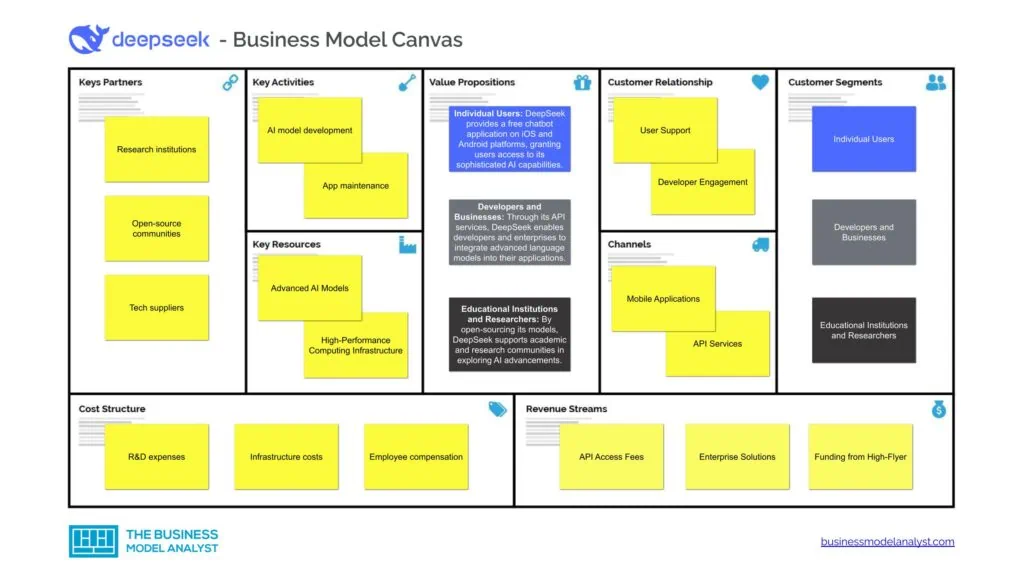

This allows developers to freely access, modify, and deploy DeepSeek's models, lowering the financial barriers to entry and promoting wider adoption of advanced AI technologies. On top of these two baseline models, keeping the training data and the other architectures the same, we remove all auxiliary losses and introduce the auxiliary-loss-free balancing strategy for comparison. Training verifiers to solve math word problems. Instability in Non-Reasoning Tasks: Lacking SFT data for general conversation, R1-Zero would produce valid solutions for math or code but be awkward on simpler Q&A or safety prompts. Domestic chat services like San Francisco-based Perplexity have started to offer DeepSeek as a search option, presumably running it in their own data centers. A couple of days ago, I was working on a project and opened Anthropic chat. We are also exploring the dynamic redundancy strategy for decoding. Beyond closed-source models, open-source models, including the DeepSeek series (DeepSeek-AI, 2024b, c; Guo et al., 2024; DeepSeek-AI, 2024a), LLaMA series (Touvron et al., 2023a, b; AI@Meta, 2024a, b), Qwen series (Qwen, 2023, 2024a, 2024b), and Mistral series (Jiang et al., 2023; Mistral, 2024), are also making significant strides, endeavoring to close the gap with their closed-source counterparts.

Distillation is also a victory for advocates of open models, where the technology is made freely available for developers to build upon. But I think it is hard for people outside the small group of experts like yourself to understand exactly what this technology competition is all about. Think about which color you prefer most, the one you absolutely love, YOUR favorite color. Your world is being redesigned in the color you love most. Every once in a while, the underlying thing that is being scaled changes a bit, or a new type of scaling is added to the training process. This usually works fine in the very high-dimensional optimization problems encountered in neural network training. The idiom "death by a thousand papercuts" describes a situation where a person or entity is slowly worn down or defeated by a large number of small, seemingly insignificant problems or annoyances, rather than by one major issue. As I said above, DeepSeek had a moderate-to-large number of chips, so it is not surprising that they were able to develop and then train a powerful model.

If you have any questions about where and how to use DeepSeek, you can contact us through the website.
