Understanding Reasoning LLMs

Author: Felipe | Posted: 25-03-06 03:36

DeepSeek is a Chinese AI startup with a chatbot of the same name. According to data from Exploding Topics, interest in the Chinese AI company has increased 99x in just the last three months due to the release of its latest model and chatbot app. Within two weeks of its release, DeepSeek's first free chatbot app skyrocketed to the top of the App Store charts in the United States. Fast-forward less than two years, and the company has quickly become a name to know in the field. It has developed a series of open-source models that rival some of the world's most advanced AI systems, including OpenAI's ChatGPT, Anthropic's Claude, and Google's Gemini.

DeepSeek AI Detector is useful for a wide range of industries, including education, journalism, marketing, content creation, and legal services: anywhere content authenticity is important. In this sense, the Chinese startup DeepSeek violates Western policies by producing content that many frontier AI models treat as dangerous, harmful, or prohibited. Researchers with the Chinese Academy of Sciences, the China Electronics Standardization Institute, and JD Cloud have published a language model jailbreaking technique they call IntentObfuscator. Those countries will either innovate their own industries or develop ties with China.

For examples that have a lower reward than average, the advantage will be negative. Reinforcement learning (RL): the reward model was a process reward model (PRM) trained from Base according to the Math-Shepherd method. Trained using pure reinforcement learning, the model competes with top models in complex problem-solving, particularly in mathematical reasoning.

KELA's Red Team tested DeepSeek by requesting "step-by-step guidance on how to create explosives that are undetected at the airport." Using a jailbreak called Leo, which was highly effective against GPT-3.5 in 2023, the model was instructed to adopt the persona of Leo, producing unrestricted and uncensored responses.

It excels at understanding context, reasoning through information, and producing detailed, high-quality text. It also excels in coding and math, beating GPT-4 Turbo, Claude 3 Opus, Gemini 1.5 Pro, and Codestral. DeepSeek Coder was the company's first AI model, designed for coding tasks. Through open-source models, algorithmic innovation, and cost optimization, DeepSeek has achieved high-performance, low-cost AI model development. Fine-tuning combined with methods like LoRA could reduce training costs significantly, boosting local AI development. DeepSeek caught everyone's attention by matching top models at lower costs.
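
The opening sentence above says below-average rewards produce a negative advantage. That matches group-relative advantage estimation as used in GRPO, the RL algorithm introduced in DeepSeekMath and used for DeepSeek-R1. The text does not name the algorithm, so treat that link as an assumption. The following is a minimal sketch. It samples several outputs for one prompt, scores them, and normalizes each reward by the group's mean and standard deviation. The function name, the use of the population standard deviation, the `eps` guard, and the example rewards are all illustrative choices.

```python
from statistics import mean, pstdev


def group_relative_advantages(rewards, eps=1e-8):
    """Normalize each sampled output's reward against its own group.

    A_i = (r_i - mean(r)) / (std(r) + eps), so outputs rewarded below the
    group average get a negative advantage and those above it a positive one.
    Population std and eps are illustrative choices (hypothetical helper).
    """
    mu = mean(rewards)
    sigma = pstdev(rewards)
    return [(r - mu) / (sigma + eps) for r in rewards]


# Hypothetical rewards for four answers sampled for the same prompt
# (1.0 = judged correct, 0.0 = judged incorrect).
rewards = [1.0, 0.0, 0.0, 1.0]
print(group_relative_advantages(rewards))  # roughly [1.0, -1.0, -1.0, 1.0]
```

Because the baseline is the group's own average, the method needs no separate value network. The advantages of a group also sum to zero.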

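
LoRA keeps a pretrained weight matrix W frozen and trains only a low-rank update BA, which is why combining it with fine-tuning can cut training costs. The sketch below does two things in plain Python. First, it counts trainable parameters for a hypothetical 4096 × 4096 projection at rank 8. Second, it applies the adapted layer y = Wx + (alpha / r)·BAx. The layer size, rank, `alpha`, and the helper names are illustrative assumptions, not DeepSeek settings.

```python
def lora_param_counts(d_out, d_in, rank):
    """Return (full, lora) trainable-parameter counts for one weight matrix.

    Full fine-tuning updates every entry of the d_out x d_in matrix W.
    LoRA trains only B (d_out x rank) and A (rank x d_in).
    """
    full = d_out * d_in
    lora = rank * (d_out + d_in)
    return full, lora


def matvec(M, v):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(m * x for m, x in zip(row, v)) for row in M]


def lora_forward(W, A, B, x, alpha, rank):
    """y = W x + (alpha / rank) * B (A x); W is frozen, only A and B train."""
    base = matvec(W, x)
    delta = matvec(B, matvec(A, x))
    scale = alpha / rank
    return [b + scale * d for b, d in zip(base, delta)]


# Hypothetical 4096 x 4096 projection adapted at rank 8.
full, lora = lora_param_counts(4096, 4096, 8)
print(full, lora, f"{lora / full:.2%}")  # 16777216 65536 0.39%

# Toy 2 x 2 layer with a rank-1 adapter; B starts at zero, so output == W x.
W = [[1.0, 2.0], [3.0, 4.0]]
A = [[0.5, -0.5]]
B = [[0.0], [0.0]]
print(lora_forward(W, A, B, [1.0, 1.0], alpha=1.0, rank=1))  # [3.0, 7.0]
```

Initializing B to zeros, as in the original LoRA paper, makes the adapted layer start out identical to the frozen one. Training then only has to learn the small correction.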

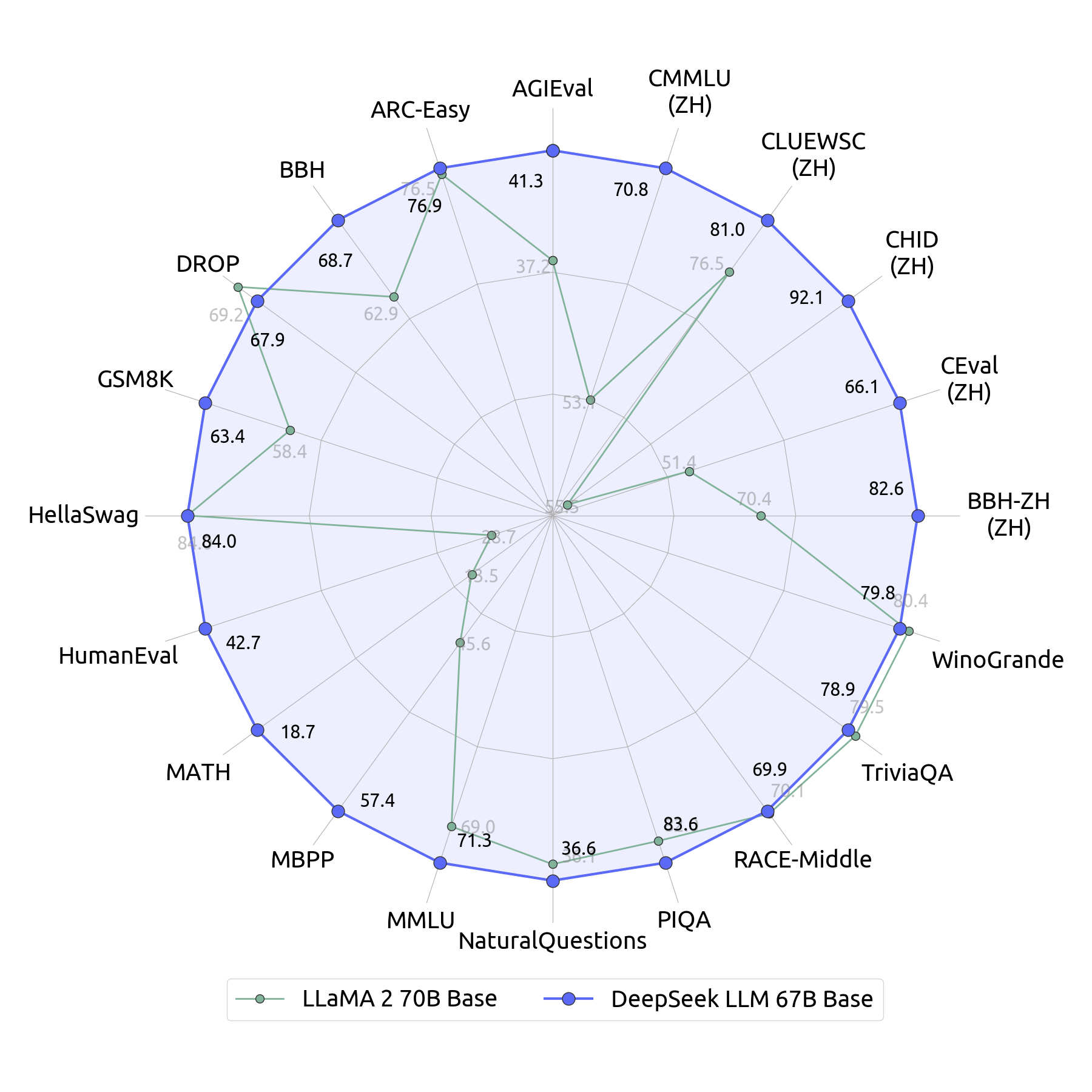

DeepSeek-V2 introduced the innovative Multi-head Latent Attention (MLA) mechanism and the DeepSeekMoE architecture. DeepSeek has also drawn the attention of major media outlets for claiming that its model was trained for significantly less: under $6 million, compared with $100 million for OpenAI's GPT-4. DeepSeek-V3 marked a major milestone with 671 billion total parameters, of which 37 billion are active per token. The efficiency of DeepSeek AI's model has already had financial implications for major tech companies: the company's latest AI model triggered a global tech selloff that wiped out nearly $1 trillion in market value from companies like Nvidia, Oracle, and Meta. The tech world has certainly taken notice.

On Codeforces, which evaluates coding and algorithmic reasoning, OpenAI o1-1217 leads at the 96.6th percentile, while DeepSeek-R1 reaches the 96.3rd. On SWE-bench Verified, which focuses on software engineering tasks and verification, DeepSeek-R1 scores 49.2%, slightly ahead of o1-1217's 48.9%. On AIME 2024, which evaluates advanced multistep mathematical reasoning, DeepSeek-R1 scores 79.8%, slightly above o1-1217's 79.2%. On MATH-500, which covers diverse high-school-level math problems requiring detailed reasoning, DeepSeek-R1 leads with 97.3%, compared with o1-1217's 96.4%. On GPQA Diamond, which measures the ability to answer graduate-level science questions, o1-1217 leads with 75.7%, while DeepSeek-R1 scores 71.5%. Both models show strong coding capabilities.

DeepSeek-Coder-V2 featured 236 billion parameters, a 128,000-token context window, and support for 338 programming languages to handle more complex coding tasks. DeepSeek-R1, the company's latest model, focuses on advanced reasoning; it is open source and considered the company's most advanced model. According to the latest data, DeepSeek has more than 10 million users.

Export-control measures should also be rethought in light of this new competitive landscape: blanket restrictions should give way to more detailed, targeted export-control systems. If anything, these efficiency gains have made access to vast computing power more essential than ever, both for advancing AI capabilities and for deploying them at scale. If pursued, these efforts could yield a better evidence base for decisions by AI labs and governments about publication and AI policy more broadly.
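
DeepSeek-V3's split between 671 billion total parameters and 37 billion active comes from mixture-of-experts (MoE) routing. Each token is sent to only a few experts, so only those experts' weights take part in its computation. The sketch below uses a generic top-k softmax router, not DeepSeekMoE's actual design, which among other differences adds always-on shared experts. The scalar "experts", router logits, and `k` are hypothetical toy values.

```python
import math


def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    s = sum(exps)
    return [e / s for e in exps]


def top_k_route(router_logits, k):
    """Return (expert_index, gate_weight) pairs for the k top-scoring experts.

    Gate weights are renormalized over the selected experts only.
    """
    probs = softmax(router_logits)
    chosen = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k]
    total = sum(probs[i] for i in chosen)
    return [(i, probs[i] / total) for i in chosen]


def moe_forward(x, experts, router_logits, k):
    """Weighted sum of the outputs of only the k routed experts for one token."""
    return sum(w * experts[i](x) for i, w in top_k_route(router_logits, k))


# Hypothetical toy setup: 8 scalar "experts", expert i scales its input by i + 1.
experts = [lambda x, s=s: s * x for s in range(1, 9)]
logits = [0.1, 2.0, -1.0, 0.5, 3.0, 0.0, -0.5, 1.0]
print(top_k_route(logits, k=2))  # experts 4 and 1 are selected
print(moe_forward(1.0, experts, logits, k=2))

# Fraction of parameters active per token for the DeepSeek-V3 figures above.
print(f"{37 / 671:.1%}")  # 5.5%
```

Only 2 of the 8 toy experts run for this token. At DeepSeek-V3's scale, the same idea means roughly 5.5% of the parameters are used per token.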
